ADOBE

Your next Photoshop edit might start with “remove that guy”

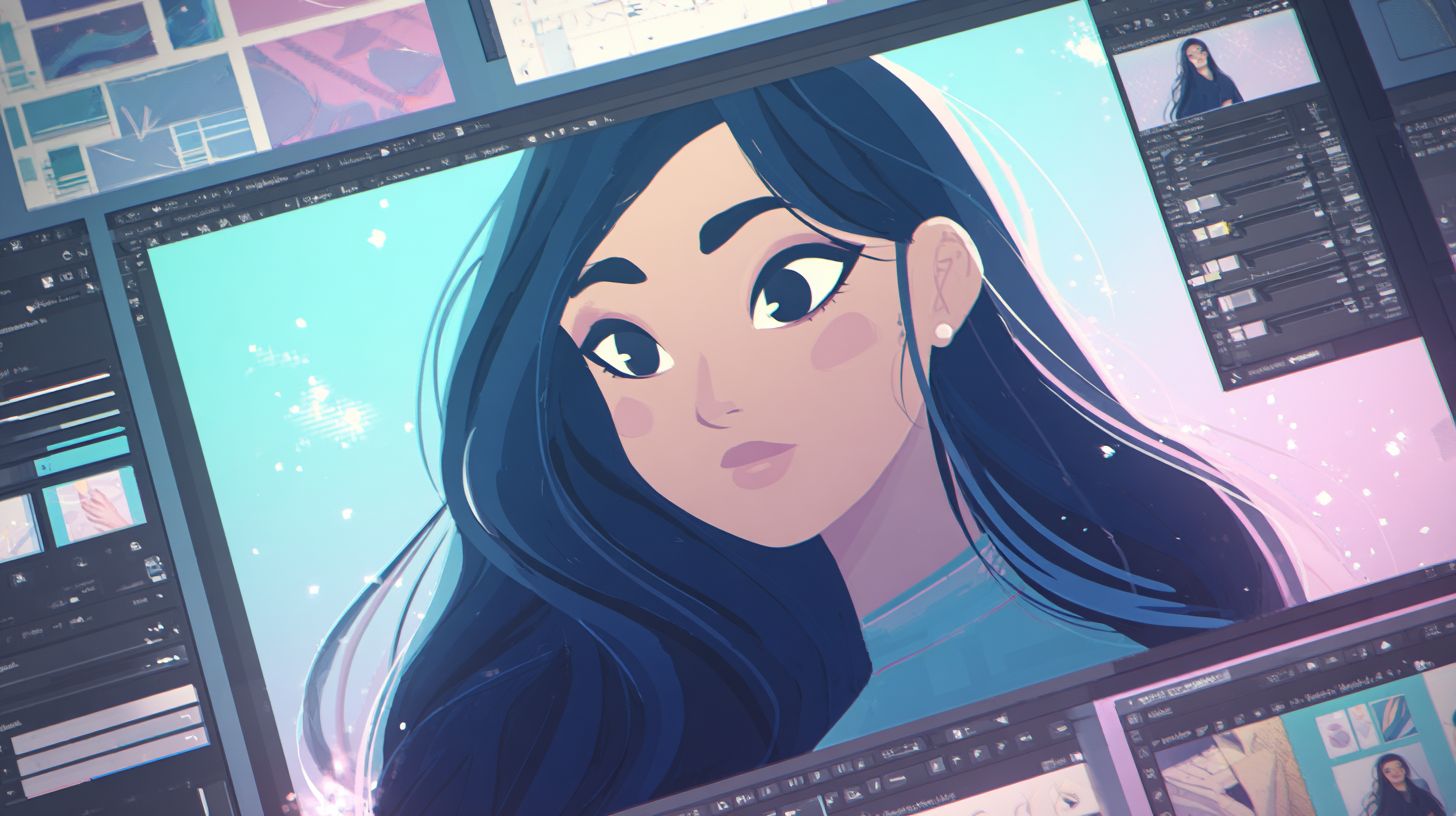

Adobe has started rolling out its AI assistant for Photoshop in beta across the web and mobile apps.

It’s also adding new AI image-editing tools to Firefly, its media generation and editing platform.

The Photoshop assistant, first announced at Adobe MAX in October, lets users edit images using natural language prompts.

That includes tasks like removing people or objects, changing colours, adjusting lighting, adding effects like a soft glow, improving shadows, cropping to a specific format, or changing the background.

Adobe says paid Photoshop users will get unlimited AI generations through April 9, while free users will start with 20 generations.

The company is also launching a public beta feature called AI markup.

This lets users draw simple marks on an image and have the assistant edit those areas.

For example, they can sketch a flower to add one to a scene, or mark an object they want removed.

Here’s what you should know:

- Photoshop’s AI assistant is now reaching users on web and mobile in beta.
- Firefly is getting a wider set of editing tools, including fill, remove, expand, upscale, and background removal.
- Adobe is also opening access to a growing mix of third-party AI models inside Firefly.

Doodle to edit

At the same time, Adobe is expanding Firefly with more editing tools.

These include Generative Fill for adding or replacing objects, Generative Remove for deleting elements, Generative Expand for extending image size, Generative Upscale for improving resolution, and a one-click background removal tool.

Adobe said in February that Firefly subscribers would get unlimited generations, as it looks to drive wider use of the platform.

Firefly also now includes more than 25 third-party image and video models, including tools from Google, OpenAI, Runway, and Black Forest Labs.

Photoshop, make me even more stunning. *Error, no changes possible* - MV